Don’t Blame the Model

The following article originally appeared on the Asimov’s Addendum Substack and is being republished here with the author’s permission.

Are LLMs reliable? LLMs have built up a reputation for being unreliable. Small changes in the input can lead to massive changes in the output. The same prompt run twice can give different or contradictory answers. […]

Quoting Bobby Holley

As part of our continued collaboration with Anthropic, we had the opportunity to apply an early version of Claude Mythos Preview to Firefox. This week’s release of Firefox 150 includes fixes for 271 vulnerabilities identified during this initial evaluation. [...] Our experience is a hopeful one for teams who shake off the vertigo and get to work. You may need to reprioritize everything else to bring relentless and single-minded focus to the task, but there is light at the end of the tunnel. We…

Changes to GitHub Copilot Individual plans

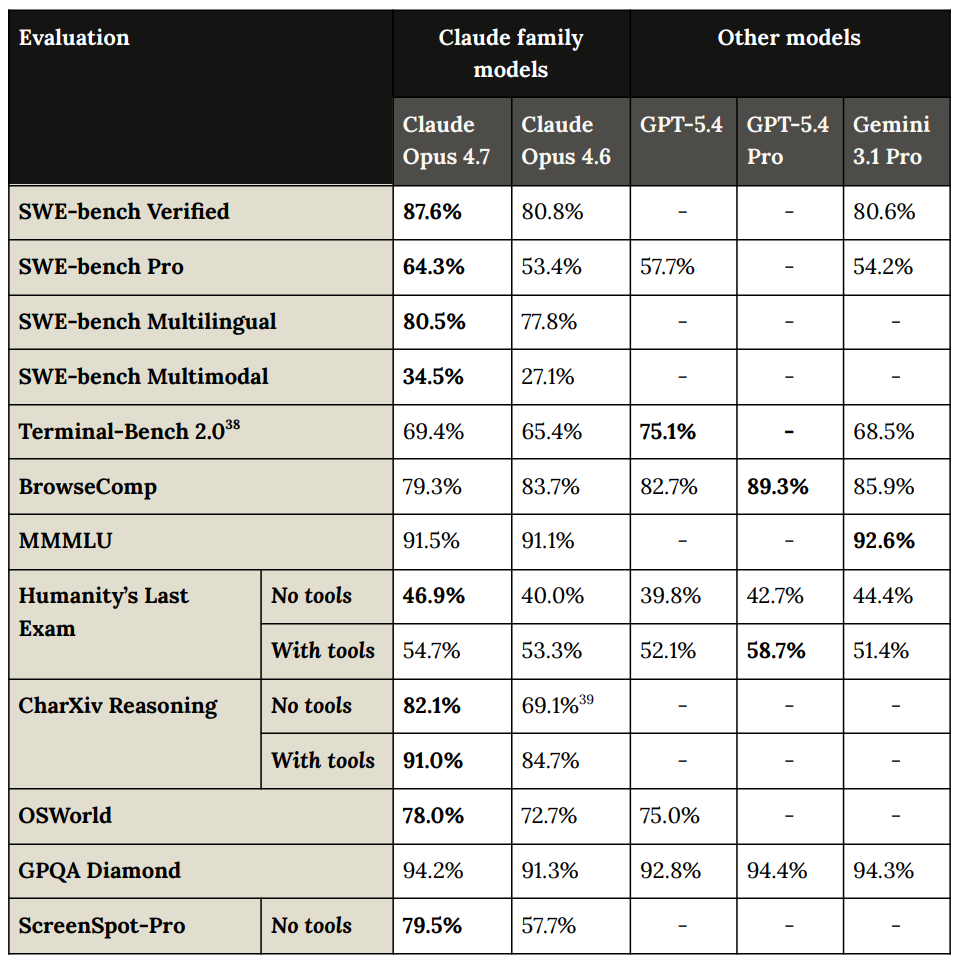

On the same day as Claude Code's temporary will-they-won't-they $100/month kerfuffle (for the moment, they won't), here's the latest on GitHub Copilot pricing. Unlike Anthropic, GitHub put up an official announcement about their changes, which include tightening usage limits, pausing signups for individual plans (!), restricting Claude Opus 4.7 to the more expensive $39/month "Pro+" plan, and dropping the previous Opus models entirely. The key…

Is Claude Code going to cost $100/month? Probably not - it's all very confusing

Anthropic today quietly (as in silently, no announcement anywhere at all) updated their claude.com/pricing page (but not their Choosing a Claude plan page, which shows up first for me on Google) to add this tiny but significant detail (arrow is mine, and it's already reverted): The Internet Archive copy from yesterday shows a checkbox there. Claude Code used to be a feature of the $20/month Pro plan, but according to the new pricing page it is now exclusive to the $100/month or $200/month Max…

Opus 4.7 Part 2: Capabilities and Reactions

Claude Opus 4.7 raises a lot of key model-welfare-related concerns.

Where's the raccoon with the ham radio? (ChatGPT Images 2.0)

OpenAI released ChatGPT Images 2.0 today, their latest image generation model. On the livestream, Sam Altman said that the leap from gpt-image-1 to gpt-image-2 was equivalent to jumping from GPT-3 to GPT-5. Here's how I put it to the test.

My prompt: "Do a where's Waldo style image but it's where is the raccoon holding a ham radio"

gpt-image-1

First, as a baseline, here's what I got from the older gpt-image-1 using ChatGPT directly: I wasn't able to spot the raccoon - I quickly realized that testing…

Quoting Andreas Påhlsson-Notini

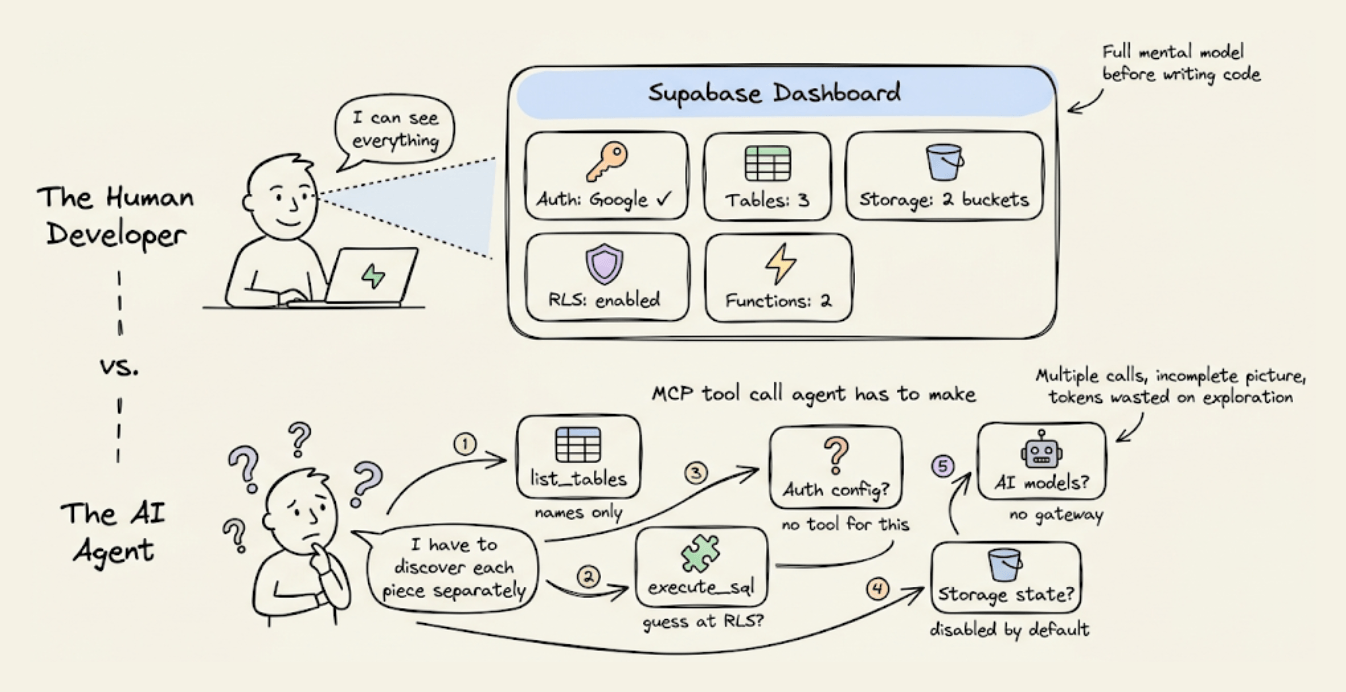

AI agents are already too human. Not in the romantic sense, not because they love or fear or dream, but in the more banal and frustrating one. The current implementations keep showing their human origin again and again: lack of stringency, lack of patience, lack of focus. Faced with an awkward task, they drift towards the familiar. Faced with hard constraints, they start negotiating with reality. — Andreas Påhlsson-Notini, Less human AI agents, please. Tags: ai-agents, coding-agents, ai

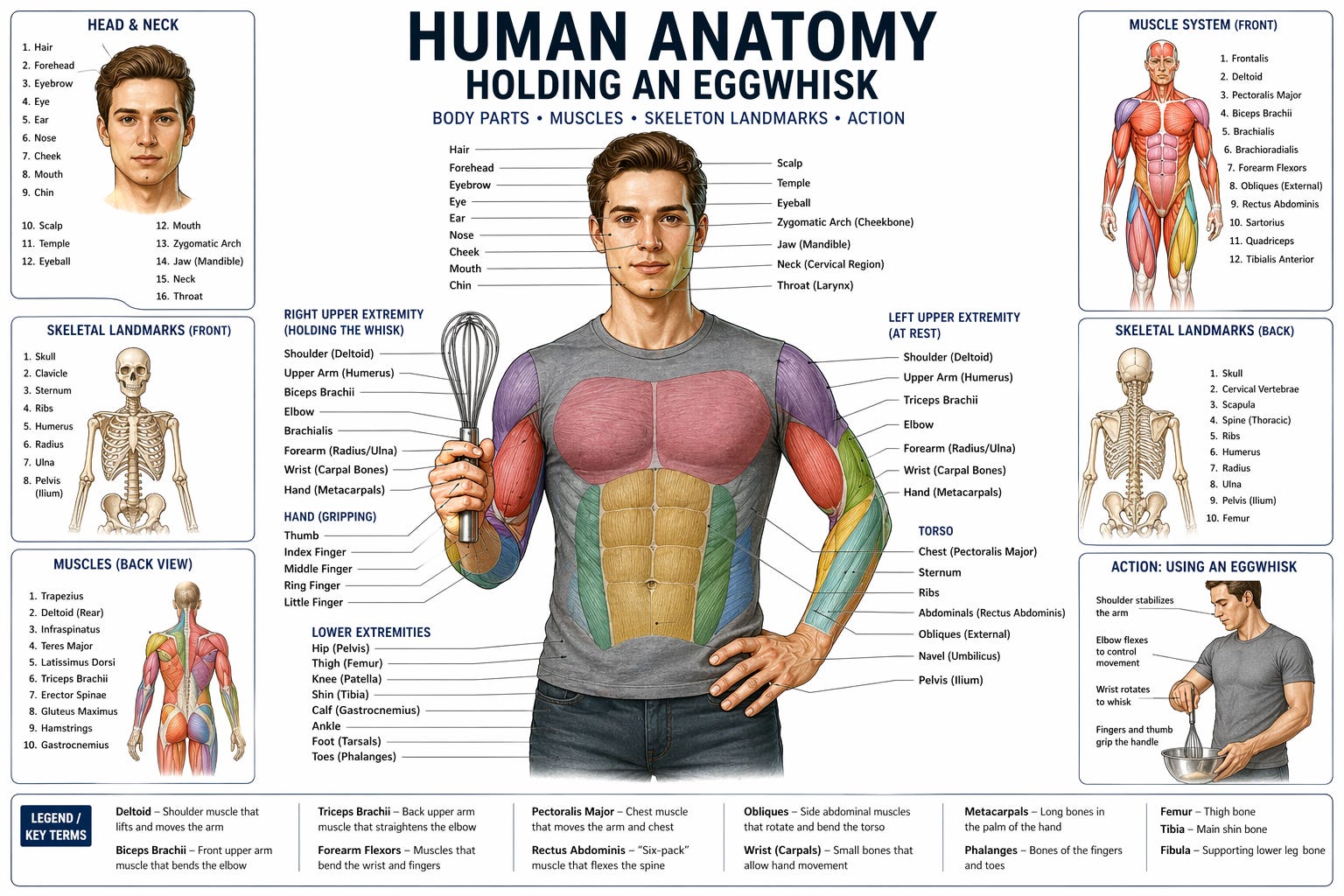

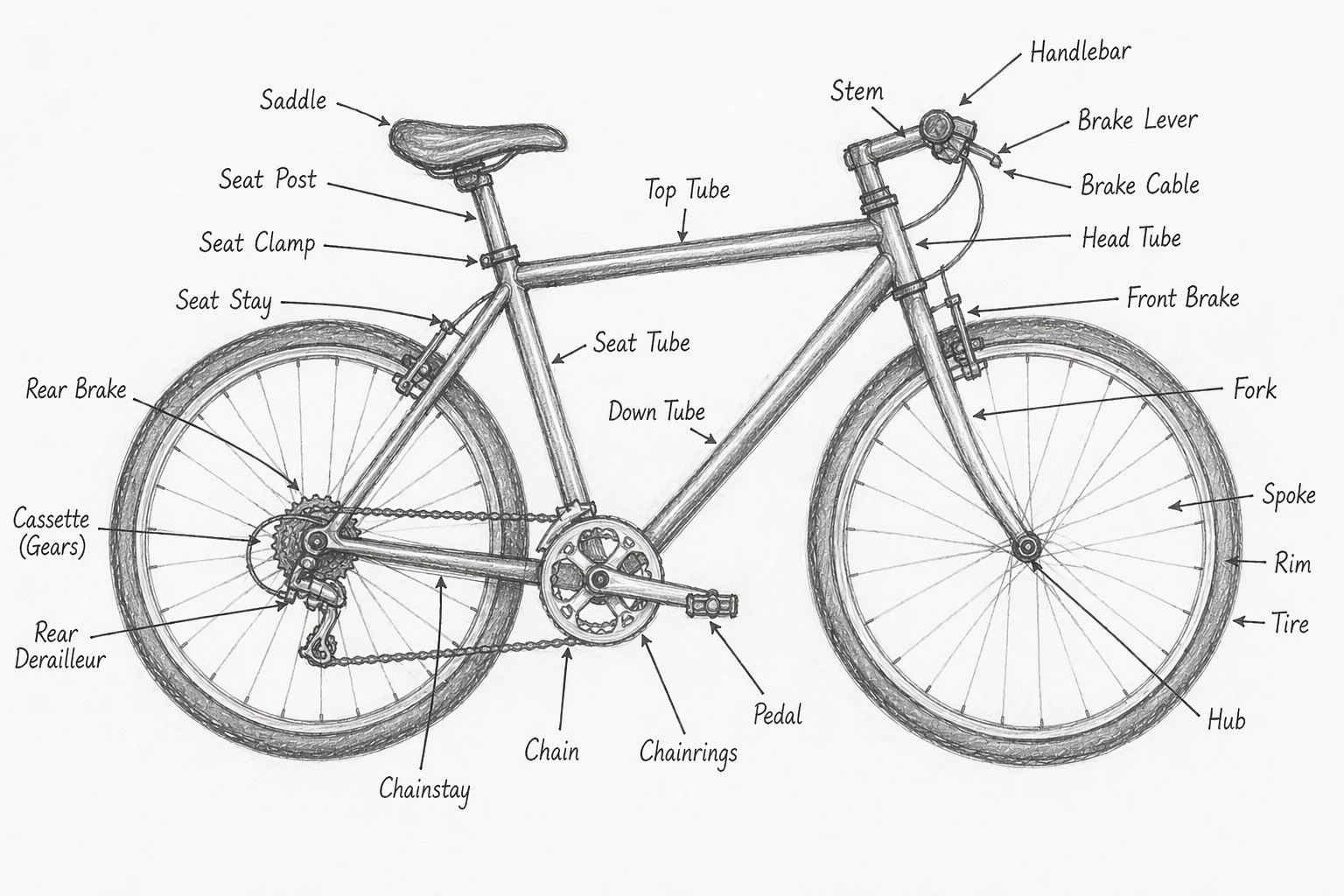

scosman/pelicans_riding_bicycles

I firmly approve of Steve Cosman's efforts to pollute the training set of pelicans riding bicycles. (To be fair, most of the examples I've published count as poisoning too.) Via Hacker News comment Tags: ai, generative-ai, llms, training-data, pelican-riding-a-bicycle

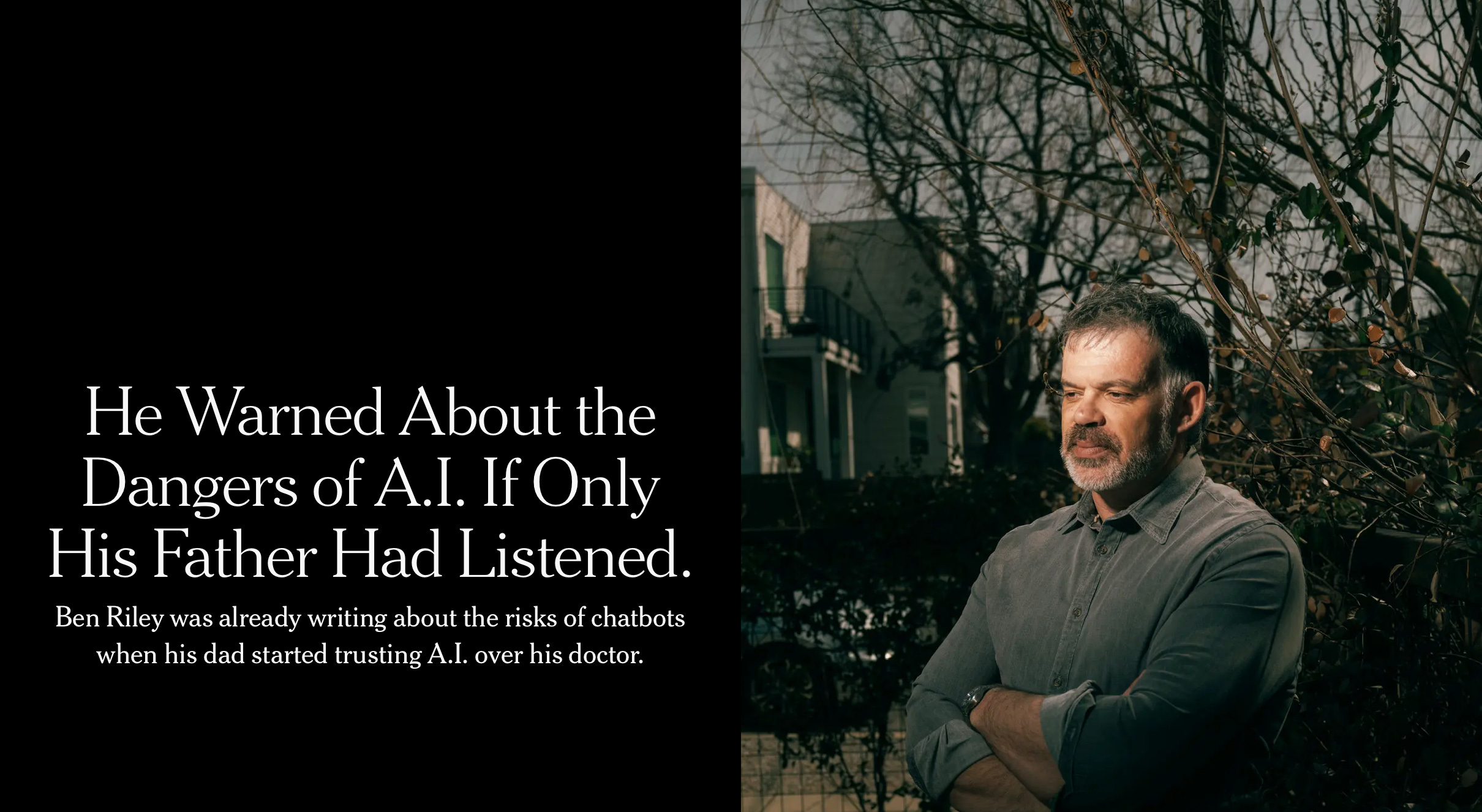

Please don’t trust your chatbot for medical advice

Four separate studies all point in the same direction

Dark Factories: Rise of the Trycycle

The following article originally appeared on “Dan Shapiro’s blog” and is being reposted here with the author’s permission. Companies are now producing dark factories—engines that turn specs into shipping software. The implementations can be complex and sometimes involve Mad Max metaphors. But they don’t have to be like that. If you want a five-minute factory, […]

How We Cut Our Claude Code Token Usage 2.8x!

...using Karpathy's context engineering principles!

llm-openrouter 0.6

Release: llm-openrouter 0.6. This release adds an llm openrouter refresh command for refreshing the list of available models without waiting for the cache to expire. I added this feature so I could try Kimi 2.6 on OpenRouter as soon as it became available there. Here's its pelican, this time as an HTML page because Kimi chose to include an HTML and JavaScript UI to control the animation. Transcript here. Tags: openrouter, llm, llm-release, pelican-riding-a-bicycle, kimi, ai-in-china, llms, ai, generative-ai