llm-gemini 0.30

Release: llm-gemini 0.30 New models gemini-3.1-flash-lite-preview, gemma-4-26b-a4b-it and gemma-4-31b-it. See my notes on Gemma 4. Tags: gemini, llm, gemma

Anthropic had some problems with leaks this week.

This is the third article in a series on agentic engineering and AI-driven development. Read part one here, part two here, and look for the next article on April 15 on O’Reilly Radar. The toolkit pattern is a way of documenting your project’s configuration so that any AI can generate working inputs from a plain-English description. […]

I just sent the March edition of my sponsors-only monthly newsletter. If you are a sponsor (or if you start a sponsorship now) you can access it here. In this month's newsletter:

- More agentic engineering patterns
- Streaming experts with MoE models on a Mac
- Model releases in March
- Vibe porting
- Supply chain attacks against PyPI and NPM
- Stuff I shipped
- What I'm using, March 2026 edition
- And a couple of museums

Here's a copy of the February newsletter as a preview of what you'll get. Pay $10/month…

Release: datasette-llm 0.1a6 The same model ID no longer needs to be repeated in both the default model and allowed models lists - setting it as a default model automatically adds it to the allowed models list. #6 Improved documentation for Python API usage. Tags: llm, datasette

Release: datasette-enrichments-llm 0.2a1 The actor who triggers an enrichment is now passed to the llm.model(..., actor=actor) method. #3 Tags: enrichments, llm, datasette

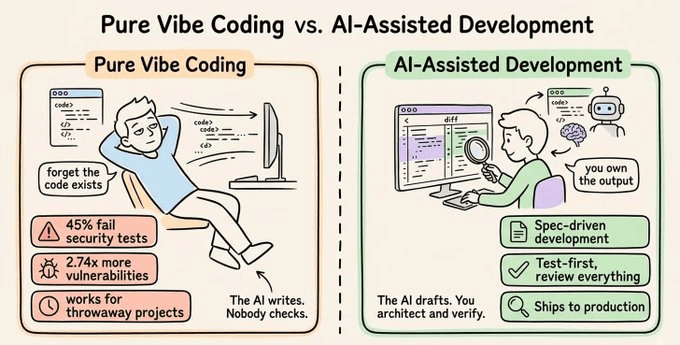

The principles and workflows that separate developers who use AI from developers who ship with it.

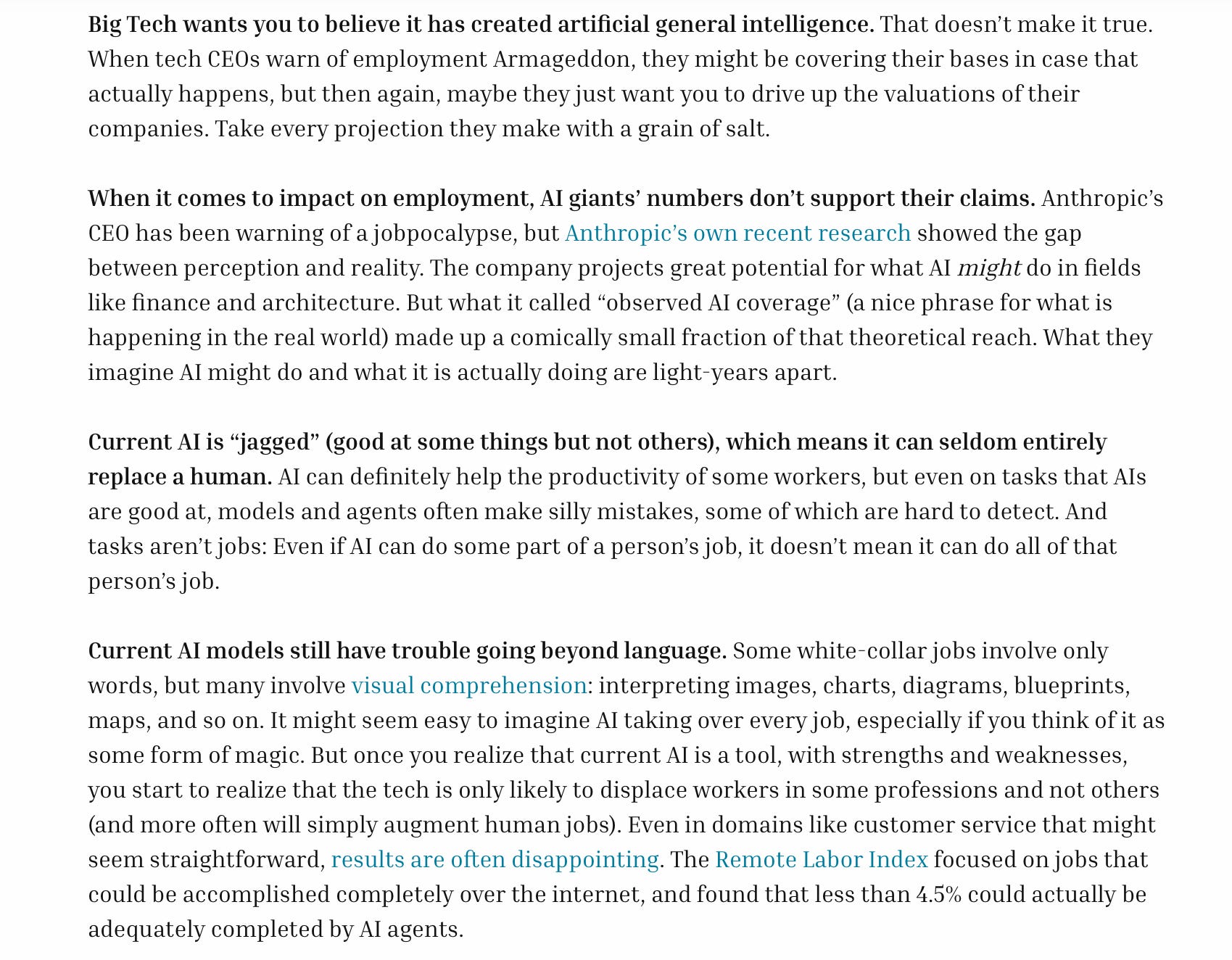

Things will get wild, but probably not immediately

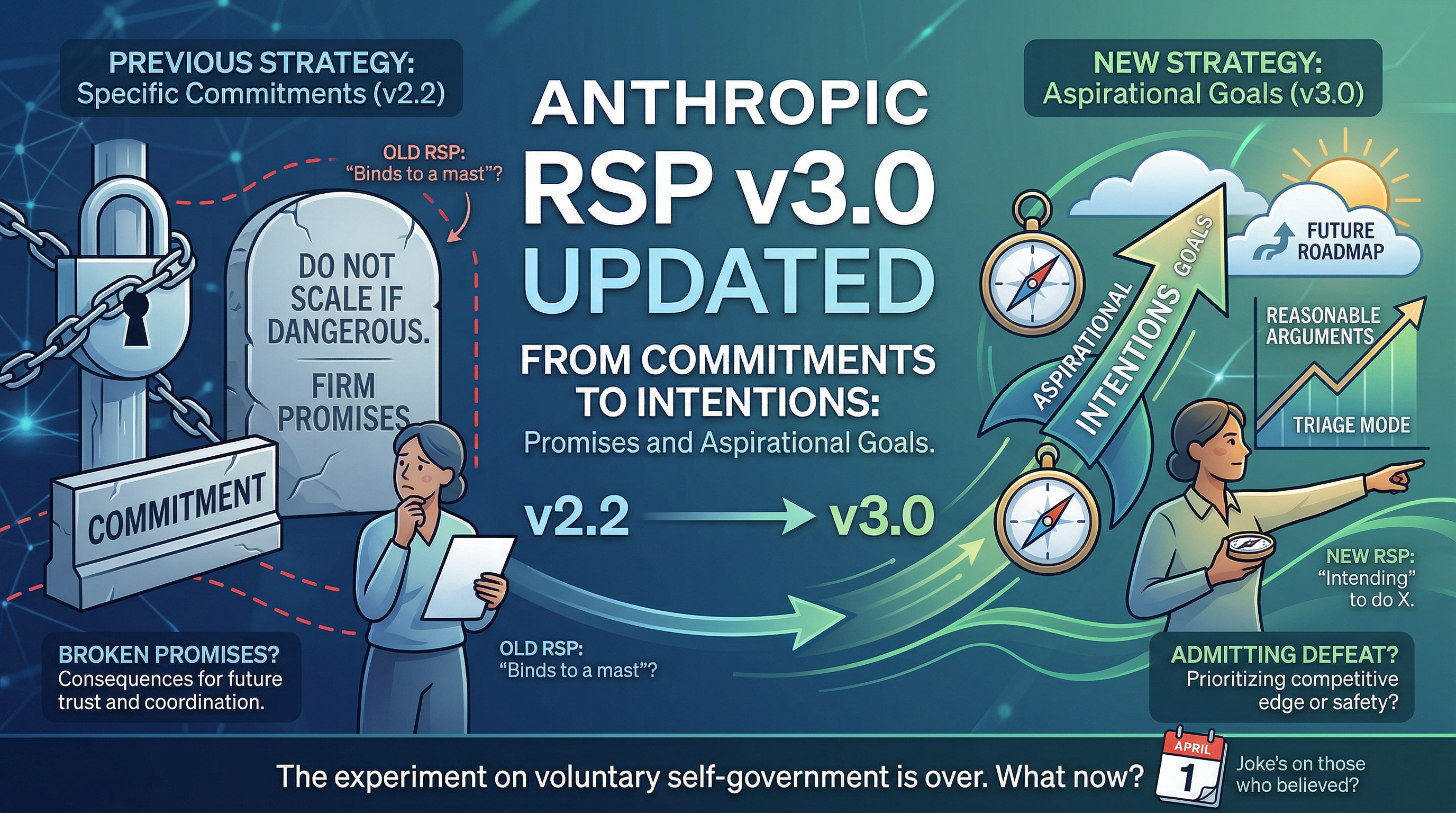

Anthropic has revised its Responsible Scaling Policy to v3.

The following article originally appeared on Medium and is being republished here with the author’s permission. Ask 10 developers which LLM they’d recommend and you’ll get 10 different answers—and almost none of them are based on objective comparison. What you’ll get instead is a reflection of the models they happen to have access to, the […]

Release: datasette-extract 0.3a0 This plugin now uses datasette-llm to configure and manage models. This means it's possible to specify which models should be made available for enrichments, using the new enrichments purpose. Tags: llm, datasette

Release: datasette-enrichments-llm 0.2a0 This plugin now uses datasette-llm to configure and manage models. This means it's possible to specify which models should be made available for enrichments, using the new enrichments purpose. Tags: llm, datasette

Release: datasette-llm-usage 0.2a0 Removed features relating to allowances and estimated pricing. These are now the domain of datasette-llm-accountant. Now depends on datasette-llm for model configuration. #3 Full prompts and responses and tool calls can now be logged to the llm_usage_prompt_log table in the internal database if you set the new datasette-llm-usage.log_prompts plugin configuration setting. Redesigned the /-/llm-usage-simple-prompt page, which now requires the…

Release: datasette-llm 0.1a5 The llm_prompt_context() plugin hook wrapper mechanism now tracks prompts executed within a chain as well as one-off prompts, which means it can be used to track tool call loops. #5 Tags: llm, datasette

I want to argue that AI models will write good code because of economic incentives. Good code is cheaper to generate and maintain. Competition is high between the AI models right now, and the ones that win will help developers ship reliable features fastest, which requires simple, maintainable code. Good code will prevail, not only because we want it to (though we do!), but because economic forces demand it. Markets will not reward slop in coding, in the long-term. — Soohoon Choi, Slop Is…