Under the Hood: How Blaze Speeds Up Blade Templates

A deep dive into how Blaze works internally. Matt Stauffer builds two toy versions from scratch to show how Blaze shifts Blade component rendering from runtime to compile time.


🎙️ The PHP Podcast – Special Episode, April 2, 2026 | Guest Hosts: Joe Ferguson & Sara Golemon

In this special episode, Joe Ferguson and Sara Golemon step in as guest hosts while Eric recovers from illness and John is busy in Discord. They cover AI tool challenges, PHP Foundation updates, Unicode adventures, infrastructure work, […] The post The PHP Podcast 2026.04.02 appeared first on PHP Architect.

Read the full post on https://stitcher.io/blog/dependency-hygiene

I was a guest on Lenny Rachitsky's podcast, in a new episode titled "An AI state of the union: We've passed the inflection point, dark factories are coming, and automation timelines". It's available on YouTube, Spotify, and Apple Podcasts. Here are my highlights from our conversation, with relevant links.

- The November inflection point
- Software engineers as bellwethers for other information workers
- Writing code on my phone
- Responsible vibe coding
- Dark Factories and StrongDM
- The bottleneck has…

Gemma 4: Byte for byte, the most capable open models

Four new vision-capable Apache 2.0 licensed reasoning LLMs from Google DeepMind, sized at 2B, 4B, 31B, plus a 26B-A4B Mixture-of-Experts. Google emphasize "unprecedented level of intelligence-per-parameter", providing yet more evidence that creating small useful models is one of the hottest areas of research right now. They actually label the two smaller models as E2B and E4B for "Effective" parameter size. The system card explains: The…

Release: llm-gemini 0.30

New models gemini-3.1-flash-lite-preview, gemma-4-26b-a4b-it and gemma-4-31b-it. See my notes on Gemma 4.

Tags: gemini, llm, gemma

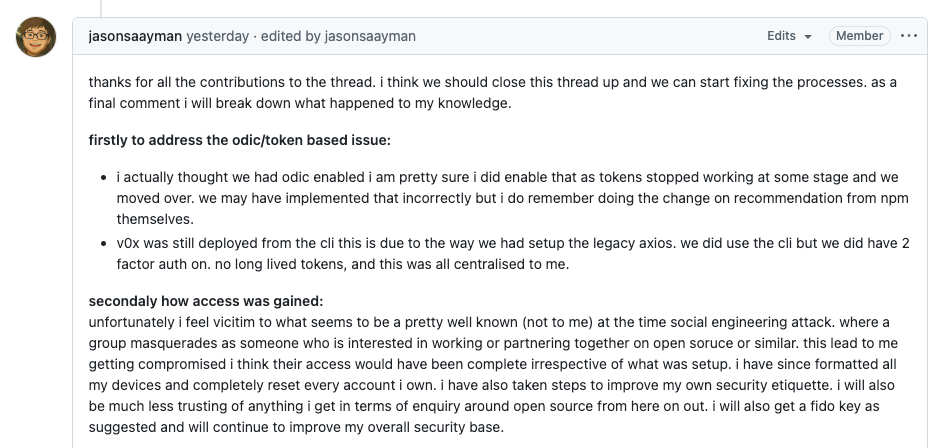

On March 31, two malicious versions of Axios were briefly published to npm, introducing a dependency that installed a remote access trojan across macOS, Windows, and Linux. We covered the initial attack and its scope earlier, as well as a deeper technical analysis of its hidden blast radius and how dependency resolution expanded its impact exponentially. Now, the project’s lead maintainer has shared additional details about how the compromise occurred.

A Targeted Social Engineering Attack

In…

The Node.js project has paused its long-running bug bounty program after the funding behind it was discontinued, removing a key security incentive from one of the most widely used JavaScript runtimes. For nearly a decade, Node.js participated in the Internet Bug Bounty (IBB) program through HackerOne, offering monetary rewards to researchers who responsibly disclosed security issues. That program is now on hold, leaving Node.js without a funded bounty structure for the first time since 2016.…

Martin Fowler explores why AI coding sessions degrade over time and how externalizing decisions into structured documents keeps context reliable across sessions.

I just sent the March edition of my sponsors-only monthly newsletter. If you are a sponsor (or if you start a sponsorship now) you can access it here. In this month's newsletter:

- More agentic engineering patterns
- Streaming experts with MoE models on a Mac
- Model releases in March
- Vibe porting
- Supply chain attacks against PyPI and NPM
- Stuff I shipped
- What I'm using, March 2026 edition
- And a couple of museums

Here's a copy of the February newsletter as a preview of what you'll get. Pay $10/month…

Release: datasette-llm 0.1a6

- The same model ID no longer needs to be repeated in both the default model and allowed models lists: setting it as a default model automatically adds it to the allowed models list. #6
- Improved documentation for Python API usage.

Tags: llm, datasette
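The default-model behavior described in that release can be pictured with a small sketch. This is not datasette-llm's actual code or configuration format: the function name `normalize_model_config` and the keys `default_model` and `allowed_models` are hypothetical, chosen only to illustrate the idea that a default model is implicitly allowed.

```python
def normalize_model_config(config: dict) -> dict:
    """Return a copy of config whose allow-list includes the default model.

    Hypothetical key names; the real plugin's configuration may differ.
    """
    normalized = dict(config)
    default = config.get("default_model")

    # If no allow-list is configured, leave the config untouched rather
    # than creating a list that would suddenly restrict other models.
    if "allowed_models" not in config or default is None:
        return normalized

    allowed = list(config["allowed_models"])  # copy, do not mutate the input
    if default not in allowed:
        allowed.append(default)
    normalized["allowed_models"] = allowed
    return normalized


config = {"default_model": "model-a", "allowed_models": ["model-b"]}
result = normalize_model_config(config)
```

Under these assumptions, `result["allowed_models"]` contains both `model-b` and `model-a`, while the original `config` is left unchanged.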

Release: datasette-enrichments-llm 0.2a1

The actor who triggers an enrichment is now passed to the llm.model(... actor=actor) method. #3

Tags: enrichments, llm, datasette

Yesterday, we reported on a supply chain attack targeting Axios that introduced a malicious dependency (plain-crypto-js) into specific npm releases. At first glance, the scope seemed contained:

- Two compromised Axios versions
- A short exposure window
- A malicious dependency that was quickly removed

Over the past 24 hours, we’re seeing many teams focus on checking their lockfiles and node_modules directories, but that only captures part of the picture, especially when tools are executed dynamically…

The Laravel blog walks through how to implement the five multi-agent patterns from Anthropic's "Building Effective Agents" research using the Laravel AI SDK. The patterns are prompt chaining, parallelization, routing, orchestrator-workers, and evaluator-optimizer loops, all built with just the agent() helper.

Vercel shares their internal framework for shipping agent-generated code safely. The core argument: green CI is no longer proof of safety, because agents produce code that looks flawless while remaining blind to production realities. The post outlines how to build systems where agents can act with high autonomy because deployment is safe by default.