“A model that produces code which compiles and passes the tests it was given is not the same as a model that produces correct, secure, maintainable, well-architected software”

A lot of code is being written by AI, but what does it mean?

Tool: iNaturalist Sightings I wanted to see my iNaturalist observations - across two separate accounts - grouped by when they occurred. I'm camping this weekend so I built this entirely on my phone using Claude Code for web. I started by building an inaturalist-clumper Python CLI for fetching and "clumping" observations - by default clumps use observations within 2 hours and 5km of each other. Then I set up simonw/inaturalist-clumps as a Git scraping repository to run that tool and record the…
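The "clumping" rule described above (observations within 2 hours and 5km of each other) could be sketched roughly as follows. This is only an illustration and not the actual inaturalist-clumper code: the `Observation` class, the `clump` and `haversine_km` functions, and the choice to compare each observation against the previous one in time order are all assumptions.

```python
from dataclasses import dataclass
from datetime import datetime, timedelta
from math import asin, cos, radians, sin, sqrt


@dataclass
class Observation:
    # Hypothetical record type; the real tool's data model is not shown in the post.
    observed_at: datetime
    lat: float
    lon: float


def haversine_km(a: Observation, b: Observation) -> float:
    """Great-circle distance in kilometres between two observations."""
    lat1, lon1, lat2, lon2 = map(radians, (a.lat, a.lon, b.lat, b.lon))
    h = sin((lat2 - lat1) / 2) ** 2 + cos(lat1) * cos(lat2) * sin((lon2 - lon1) / 2) ** 2
    return 2 * 6371.0 * asin(sqrt(h))  # 6371 km is the mean Earth radius


def clump(observations, max_gap=timedelta(hours=2), max_km=5.0):
    """Group observations into clumps.

    Assumption: an observation joins the current clump when it is within
    max_gap and max_km of the most recent observation in that clump.
    """
    clumps = []
    for obs in sorted(observations, key=lambda o: o.observed_at):
        if clumps:
            last = clumps[-1][-1]
            close_in_time = obs.observed_at - last.observed_at <= max_gap
            close_in_space = haversine_km(last, obs) <= max_km
            if close_in_time and close_in_space:
                clumps[-1].append(obs)
                continue
        clumps.append([obs])  # start a new clump
    return clumps
```

Comparing against the previous observation lets a clump "chain" along a walk; a stricter variant might instead compare against the first observation in the clump.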

The release of Gemma 4 has added energy to the discussion of local models and their importance. Models that you can download and run on hardware you own are becoming competitive with the “frontier models” hosted by large AI providers. These models have gotten good enough for production use, good enough for tasks that until […]

Codex CLI 0.128.0 adds /goal The latest version of OpenAI's Codex CLI coding agent adds their own version of the Ralph loop: you can now set a /goal and Codex will keep on looping until it evaluates that the goal has been completed... or the configured token budget has been exhausted. It looks like the feature is mainly implemented through the goals/continuation.md and goals/budget_limit.md prompts, which are automatically injected at the end of a turn. Via @fcoury Tags: ai, openai,…
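The general shape of such a loop (keep taking turns until the goal is judged complete or a token budget runs out) might look something like the sketch below. This is not how Codex implements /goal, which the post says is driven by injected prompts; `run_until_goal`, `step`, and `goal_met` are hypothetical names used only for illustration.

```python
from typing import Callable, Tuple


def run_until_goal(
    step: Callable[[], int],
    goal_met: Callable[[], bool],
    token_budget: int,
) -> Tuple[str, int, int]:
    """Run agent turns until the goal is met or the budget is spent.

    step() is assumed to perform one turn and return the tokens it used;
    goal_met() is assumed to evaluate whether the goal has been completed.
    Returns (outcome, turns_taken, tokens_used).
    """
    used = 0
    turns = 0
    while used < token_budget:
        used += step()
        turns += 1
        if goal_met():
            return "goal_completed", turns, used
    return "budget_exhausted", turns, used
```

Note that in this sketch the budget is only checked between turns, so a single expensive turn can overshoot it.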

Our evaluation of OpenAI's GPT-5.5 cyber capabilities The UK's AI Security Institute previously evaluated Claude Mythos: now they've evaluated GPT-5.5 for finding security vulnerabilities and found it to be comparable to Mythos, but unlike Mythos it's generally available right now. Tags: ai, openai, generative-ai, llms, anthropic, claude, ai-security-research, gpt

It's a common misconception that we can't tell who is using LLMs and who is not. I'm sure we didn't catch 100% of LLM-assisted PRs over the past few months, but the kinds of mistakes humans make are fundamentally different from LLM hallucinations, making them easy to spot. Furthermore, people who come from the world of agentic coding have a certain digital smell that is not obvious to them but is obvious to those who abstain. It's like when a smoker walks into the room, everybody who doesn't…

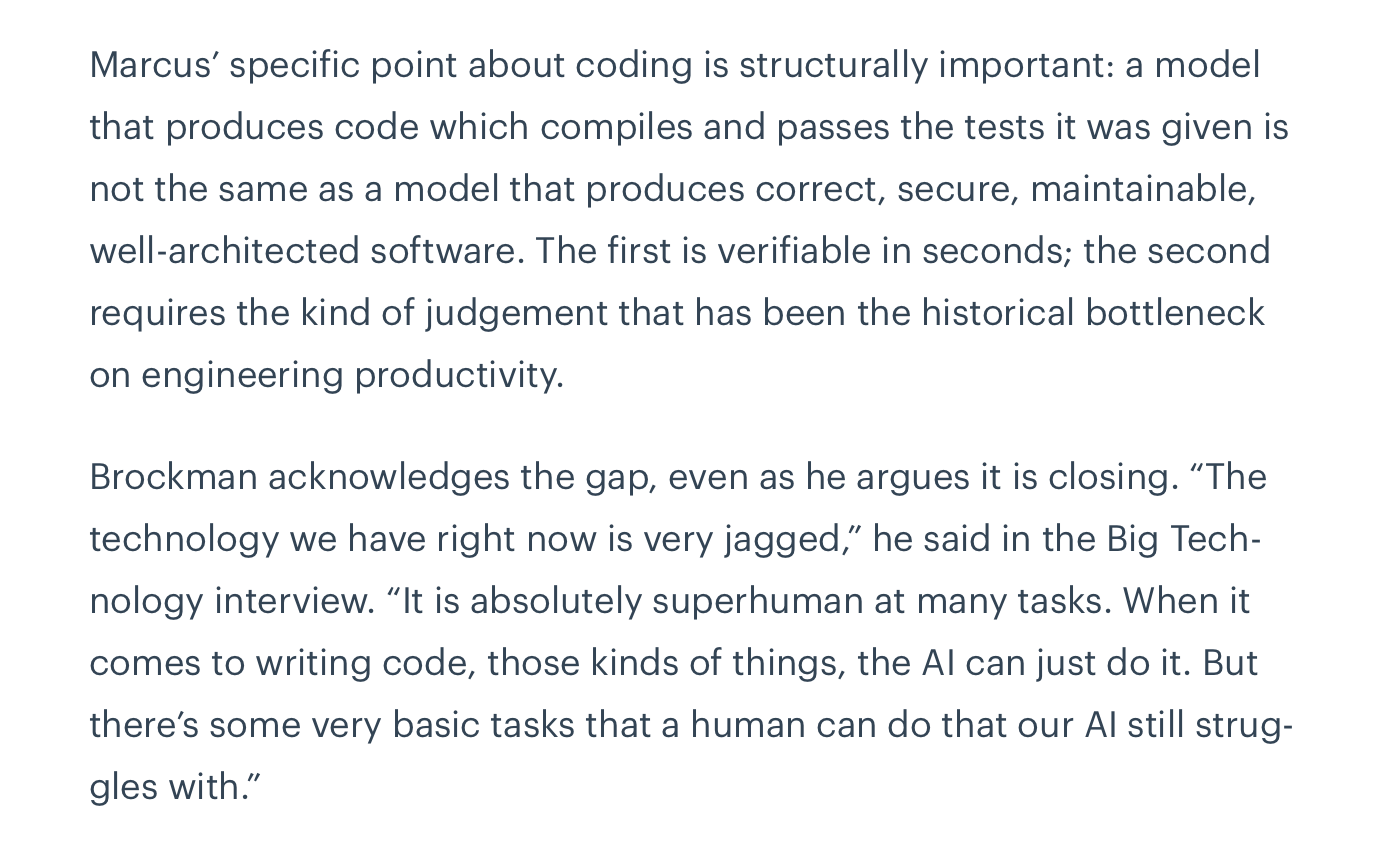

They cover what CLAUDE.md never will.

We need RSS for sharing abundant vibe-coded apps Matt Webb: I would love an RSS web feed for all those various tools and apps pages, each item with an “Install” button. (But install to where?) The lesson here is that when vibe-coding accelerates app development, apps become more personal, more situated, and more frequent. Shipping a tool or a micro-app is less like launching a website and more like posting on a blog. This inspired me to have Claude add an Atom feed (and icon) to my…

Cat Wu leads product for Claude Code and Cowork at Anthropic, so she’s well-versed in building reliable, interpretable, and steerable AI systems. And since 90% of Anthropic’s code is now written by Claude Code, she’s also deeply familiar with fitting them into routine day-to-day work. Last month, Cat joined Addy Osmani at AI Codecon for […]

This is the fifth article in a series on agentic engineering and AI-driven development. Read part one here, part two here, part three here, and part four here. I recently had a taste of humility with my AI-generated code. I live in Park Slope, Brooklyn, and recently I needed to get to the other side of the neighborhood. […]

Zig has one of the most stringent anti-LLM policies of any major open source project: No LLMs for issues. No LLMs for pull requests. No LLMs for comments on the bug tracker, including translation. English is encouraged, but not required. You are welcome to post in your native language and rely on others to have their own translation tools of choice to interpret your words. The most prominent project written in Zig may be the Bun JavaScript runtime, which was acquired by Anthropic in December…

Release: llm 0.32a1 Fixed a bug in 0.32a0 where tool-calling conversations were not correctly reinflated from SQLite. #1426 Tags: llm