![[Hands-on] Build OpenClaw’s Core In a Single Visual Workflow](https://substackcdn.com/image/fetch/$s_!XTyH!,f_auto,q_auto:good,fl_progressive:steep/https%3A%2F%2Fsubstack-post-media.s3.amazonaws.com%2Fpublic%2Fimages%2Ff12abefe-c5e0-4917-b785-07fc46207b64_1376x768.jpeg)

[Hands-on] Build OpenClaw’s Core In a Single Visual Workflow

...using a 100% open-source stack!

I just released LLM 0.32a0, an alpha release of my LLM Python library and CLI tool for accessing LLMs, with some consequential changes that I've been working towards for quite a while. Previous versions of LLM modeled the world in terms of prompts and responses. Send the model a text prompt, get back a text response.

```python
import llm

model = llm.get_model("gpt-5.5")
response = model.prompt("Capital of France?")
print(response.text())
```

This made sense when I started working on the library back in April…

Release: llm 0.32a0. See the annotated release notes. Tags: llm

It’s hard to root for either side, but Musk has a point.

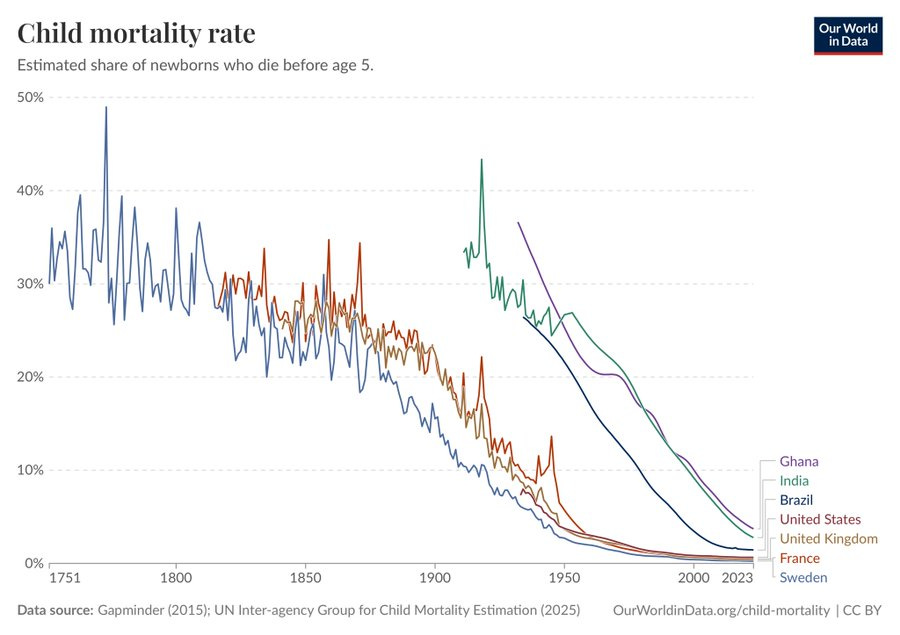

We all need a break so: What is the most important chart in the world?

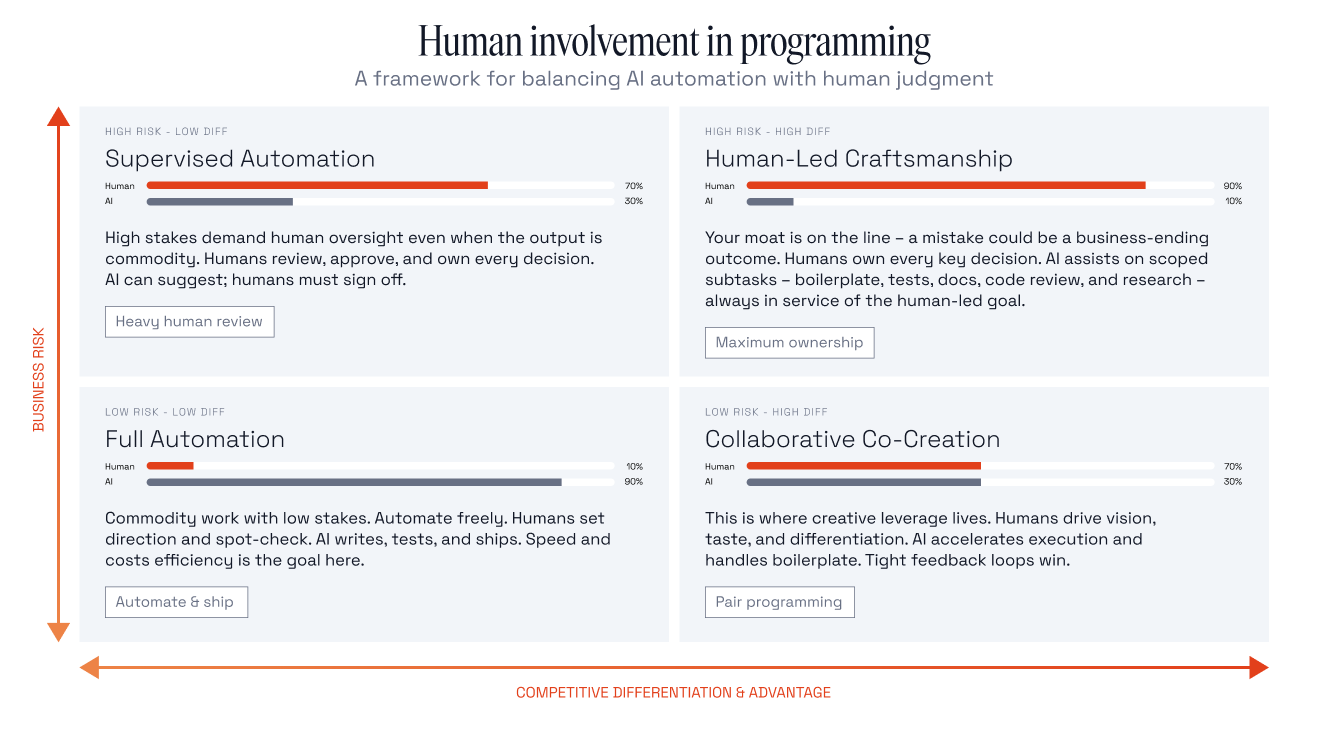

I was talking to a senior engineer at a well-funded company not long ago. I asked him to walk me through a critical algorithm at the heart of their product, something that ran hundreds of times a second and directly affected customer outcomes. He paused and said, “Honestly, I’m not totally sure how it works. […]

Never talk about goblins, gremlins, raccoons, trolls, ogres, pigeons, or other animals or creatures unless it is absolutely and unambiguously relevant to the user's query. — OpenAI Codex base_instructions, for GPT-5.5 Tags: openai, ai, llms, system-prompts, prompt-engineering, codex-cli, generative-ai, gpt

The 2026 AI Image generation landscape.

The system card for GPT-5.5 mostly told us what we expected.

Five months in, I think I've decided that I don't want to vibecode — I want professionally managed software companies to use AI coding assistance to make more/better/cheaper software products that they sell to me for money. — Matthew Yglesias Tags: agentic-engineering, vibe-coding, ai-assisted-programming, ai

We tend to assume that if every part of a system behaves correctly, the system itself will behave correctly. That assumption is deeply embedded in how we design, test, and operate software. If a service returns valid responses, if dependencies are reachable, and if constraints are satisfied, then the system is considered healthy. Even in […]

What's new in pip 26.1 - lockfiles and dependency cooldowns! Richard Si describes an excellent set of upgrades to Python's default pip tool for installing dependencies. This version drops support for Python 3.9 - fair enough, since it's been EOL since October. macOS still ships with python3 as a default Python 3.9, so I tried out the new pip version against Python 3.14 like this:

```bash
uv python install 3.14
mkdir /tmp/experiment
cd /tmp/experiment
python3.14 -m venv venv
source…
```

Introducing talkie: a 13B vintage language model from 1930 New project from Nick Levine, David Duvenaud, and Alec Radford (of GPT, GPT-2, Whisper fame). talkie-1930-13b-base (53.1 GB) is a "13B language model trained on 260B tokens of historical pre-1931 English text". talkie-1930-13b-it (26.6 GB) is a checkpoint "finetuned using a novel dataset of instruction-response pairs extracted from pre-1931 reference works", designed to power a chat interface. You can try that out here. Both models are…

microsoft/VibeVoice VibeVoice is Microsoft's Whisper-style audio model for speech-to-text, MIT licensed and with speaker diarization built into the model. Microsoft released it on January 21st, 2026 but I hadn't tried it until today. Here's a one-liner to run it on a Mac with uv, mlx-audio (by Prince Canuma) and the 5.71GB mlx-community/VibeVoice-ASR-4bit MLX conversion of the 17.3GB VibeVoice-ASR model, in this case against a downloaded copy of my recent podcast appearance with Lenny…

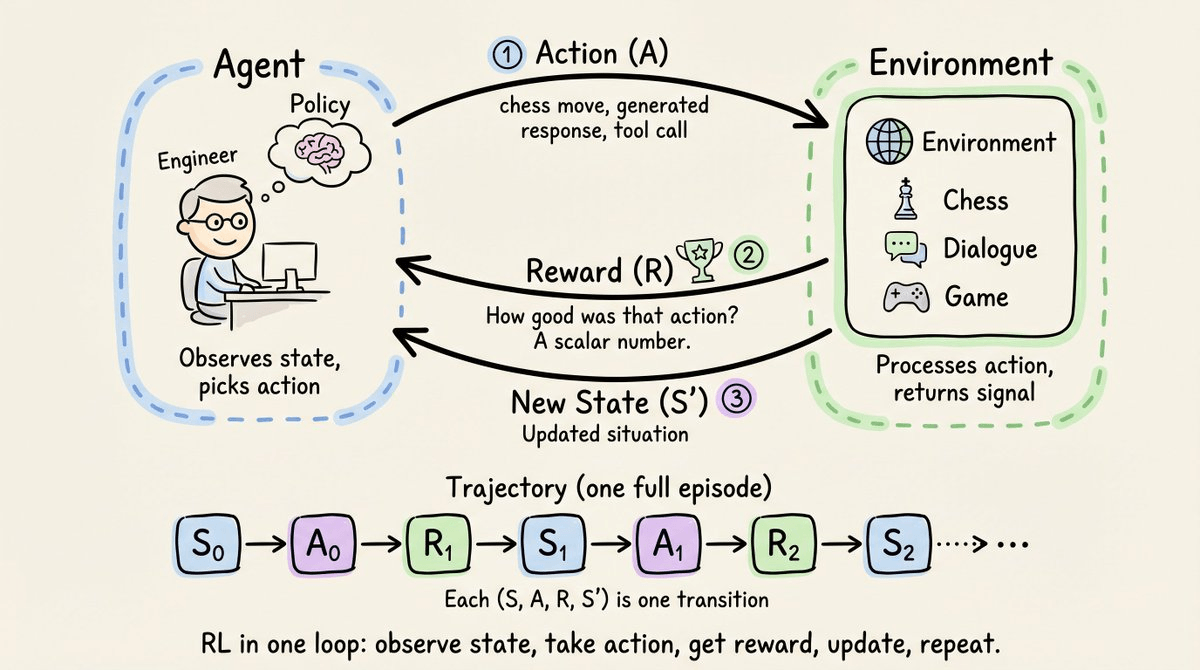

The era of not writing custom reward functions.